How To Combat Twitter Bots And Twitter Misinformation

The spread of fake news on Twitter continues to be a problem for brands, politicians, and everyday users. Here’s what to know about Twitter misinformation.

When Elon Musk announced his intention to take over Twitter in early 2022, it seemed like a done deal. Soon, however, Musk hit the brakes on the acquisition deal — citing the prevalence of Twitter bots as a concern.

Twitter claims that less than 5% of its daily active users are spam accounts. Musk, however, appears to believe that percentage is as high as 20%.

“My offer was based on Twitter’s SEC filings being accurate,” Musk tweeted. “Yesterday, Twitter’s CEO publicly refused to show proof of <5%. This deal cannot move forward until he does.”

Twitter’s CEO, Parag Agrawal, says that Twitter suspends “over half a million spam accounts every day.” But regardless of the actual ratio of bots to real users, Twitter is widely seen as a primary source of misinformation and disinformation, spread by humans as well as bots. Twitter misinformation has been a problem for brands since the platform’s founding. Its role in spreading fake news, conspiracy theories, and harmful information is something that companies of all sizes need to recognize and combat.

Twitter misinformation: the bots

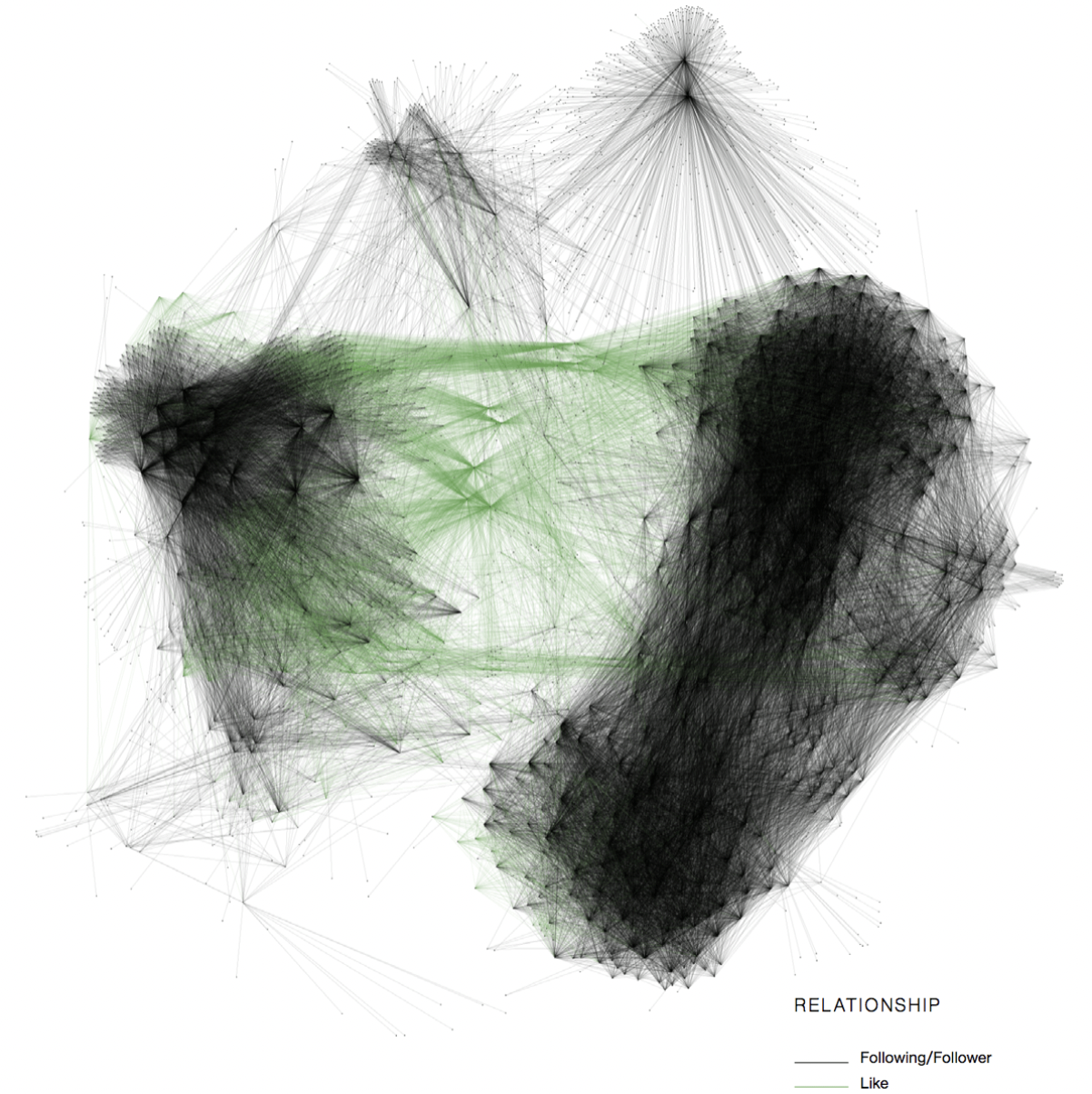

Twitter is notorious for spreading misinformation, and much of it originates from bots. Research from Jordan Wright and Olabode Anise identified three main categories of bots that take advantage of Twitter to spread fake news and false information. These categories include:

- Spambots: accounts that share spam links (e.g., unwanted advertising)

- Fake followers: accounts that do not tweet, but instead follow a large number of accounts

- Amplification bots: accounts that simply retweet, like, or reply to tweets from other bot accounts

Wright and Anise tested their research on a cryptocurrency scheme, tracking accounts that impersonated verified Twitter users and promised free cryptocurrency while actually robbing the people they targeted. By following the scheme, Wright and Anise found all three bot types working together to target individuals.

Understanding how different types of bots work to share and boost false messages is necessary to plan your defense against Twitter misinformation. But bots aren’t the only issue with Twitter that brands need to consider.
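To make the three categories concrete, here is a minimal Python sketch that sorts an account into one of them based on its activity. It is only an illustration of the definitions above, not the method Wright and Anise used. The `Account` fields, the `categorize` function, and both thresholds are hypothetical placeholders, and real bot detection is far harder because many human accounts would match rules this simple.

```python
from dataclasses import dataclass


@dataclass
class Account:
    """Hypothetical summary of one account's activity (all fields are assumptions)."""
    original_tweets: int    # tweets the account wrote itself
    amplifications: int     # retweets, likes, and replies
    spam_link_tweets: int   # original tweets that share unwanted links
    following: int          # number of accounts it follows


def categorize(account: Account,
               follow_threshold: int = 1000,
               ratio_threshold: float = 0.8) -> str:
    """Map an account to one of the three bot categories, or 'unclassified'.

    The thresholds are illustrative placeholders, not values from the research.
    """
    total_activity = account.original_tweets + account.amplifications

    # Fake followers: no activity at all, but a large following list.
    if total_activity == 0:
        if account.following >= follow_threshold:
            return "fake follower"
        return "unclassified"

    # Spambots: most of the account's own tweets carry spam links.
    if (account.original_tweets > 0
            and account.spam_link_tweets / account.original_tweets >= ratio_threshold):
        return "spambot"

    # Amplification bots: activity is almost entirely retweets, likes, and replies.
    if account.amplifications / total_activity >= ratio_threshold:
        return "amplification bot"

    return "unclassified"
```

For example, `categorize(Account(0, 0, 0, 5000))` returns `"fake follower"`, because the account has no activity but follows 5,000 others. A rule set like this is best treated as a first filter that flags accounts for human review rather than as a verdict.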

Twitter misinformation: real users

Musk’s campaign to “defeat the spam bots or die trying!” really only addresses part of the problem. In fact, misinformation is commonly spread by real people — both inadvertently and intentionally.

A new study shows that while bots are an issue, people are the “prime culprits” when it comes to spreading misinformation through social networks. Disinformation originated as a way for state actors to conduct espionage and spread propaganda, but today ordinary users carry much of it. An analysis of a Twitter data set covering 126,000 news items shared 4.5 million times by 3 million people found that false news stories routinely reached well over 10,000 people.

Digging into the data further, researchers discovered that those sharing false news stories didn’t necessarily have a large number of followers. Rather, it’s the content of the tweets that caused them to spread more quickly.

“As it turned out, tweets containing false information were more novel—they contained new information that a Twitter user hadn't seen before—than those containing true information,” wrote Science Journal. “And they elicited different emotional reactions, with people expressing greater surprise and disgust. That novelty and emotional charge seem to be what's generating more retweets.”

In fact, tweets containing false information reach 1,500 people on Twitter six times faster than tweets with real information.

What does this research tell us? Combating misinformation on Twitter requires understanding why disinformation campaigns are so effective, as well as targeting spam bots. While bots are comparatively easy to mitigate, it’s real people who are amplifying the false narratives of disinformation campaigns.

How to combat Twitter misinformation

Clearly, it’s difficult to combat the spread of Twitter misinformation. There are over 500 million tweets generated every day, and one brand can’t possibly counteract the spread of fake news in real time.

However, there are ways to manage the spread of disinformation that could negatively impact your brand. The first is to use Twitter sentiment analysis. Media monitoring tools like PeakMetrics can analyze Twitter in real time to track mentions of your specific company or industry. PeakMetrics goes beyond simple social listening to provide additional information such as the context in which the mentions were made and a link to the source.
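As a rough illustration of what sentiment analysis on brand mentions means, the Python sketch below filters a list of tweets for a brand name and scores each one with a tiny word list. It is a toy example and does not represent how PeakMetrics or any commercial tool works. The word lists, the function names, and the brand "Acme" are all made up for the example, and the matching only handles single-word brand names.

```python
import re

# Tiny illustrative word lists; a real system would use a trained model.
NEGATIVE = {"scam", "fraud", "fake", "recall", "lawsuit"}
POSITIVE = {"love", "great", "trusted", "recommend"}


def score_mentions(tweets, brand):
    """Return (tweet, score) pairs for tweets that mention the brand.

    Score = number of positive words minus number of negative words.
    """
    results = []
    for text in tweets:
        words = re.findall(r"[a-z0-9']+", text.lower())
        if brand.lower() not in words:
            continue  # skip tweets that do not mention the brand
        score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
        results.append((text, score))
    return results


def flag_negative(tweets, brand):
    """Return mentions with a negative score, most negative first."""
    scored = score_mentions(tweets, brand)
    return sorted((pair for pair in scored if pair[1] < 0), key=lambda pair: pair[1])


sample = [
    "Acme is a scam and a fraud",
    "I love Acme, great service",
    "Nothing to see here",
    "Is the Acme recall real?",
]
flagged = flag_negative(sample, "Acme")
```

Here `flagged` holds the two negative mentions, with the "scam and a fraud" tweet first. Word counting like this misses sarcasm, context, and images, which is one reason brands tend to rely on dedicated monitoring tools rather than simple keyword rules.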

PeakMetrics also uses machine learning to predict how a message will spread and develop online. PeakMetrics has been battle-tested on some of today’s most complex media issues – from responding to crisis management situations to combating state-sponsored disinformation. And, because PeakMetrics is able to monitor TV, radio, podcasts, and other forms of content, brands can develop a crisis response that limits the spread of Twitter misinformation before it gets out of hand.

Twitter’s crisis misinformation policy

Separately, brands need to monitor how Twitter’s approach to content moderation is changing to lessen the impact of spam bots. In May 2022, Twitter announced its new crisis misinformation policy.

“To reduce potential harm, as soon as we have evidence that a claim may be misleading, we won’t amplify or recommend content that is covered by this policy across Twitter – including in the Home timeline, Search, and Explore. In addition, we will prioritize adding warning notices to highly visible Tweets and Tweets from high profile accounts, such as state-affiliated media accounts, verified, official government accounts,” wrote Twitter.

The news release noted that Twitter is trying to find a balance between reflecting free speech and protecting users from false information, and as such will likely continue to evolve its policies over time. For brands, this means the onus of managing the risk of false information still falls on the company itself: once a message is out there, it’s unlikely that Twitter will remove it completely.

Bottom line: constant vigilance is required to combat Twitter misinformation spread by Twitter bots and users. While the platform is taking steps to reduce disinformation campaigns, brands should have their own plan to address the flow of false news and protect consumer trust.

To learn more about PeakMetrics’ tools, request a demo with one of our experts.

Featured image source: https://unsplash.com/photos/IrkHdv88Xp8
